Originality.ai is a software service that combines an AI content detector with a plagiarism checker.

There is a lot of buzz these days when it comes to AI (artificial intelligence) writers. When ChatGPT was first released, the world was shocked by how it could produce unique and professionally written content - almost as if it was written by a human.

However, creating this type of content at scale raises a concern for bloggers, business owners, and anyone who relies on content that gets indexed by search engines.

Apparently, Google already has an algorithm in place to detect AI content. What do you think is going to happen? If you use AI content without editing it, I can see soft penalties handed out which will result in lost rankings.

So how do you avoid this problem? Enter Originality.AI, an AI content detector.

This is going to be a very comprehensive review of Originality.AI with real live tests and no fluff.

I ran several tests with Originality.ai using my own content from this very blog (written entirely by me) and content generated with ChatGPT, Jasper AI, and Copy.ai. Can this tool detect AI-written content? How does it perform when analyzing human-written content?

My Originality.ai Rating 4.5 / 5

Originality.ai is the best AI content detector built specifically for web publishers. The accuracy is strong, the pricing is fair, and the feature set has grown into something genuinely useful well beyond detection alone.

I'm deducting half a star for the false positive issue: it does occasionally flag human-written content as AI-generated, which can create friction if you're using it to vet freelancers. That said, no AI detector gets this right 100% of the time, and Originality.ai manages the problem better than most of its competitors.

What Is Originality.ai?

Originality.ai is an AI content detection and content quality platform built for web publishers, content agencies, and SEO professionals. It launched in 2022 as a tool to detect whether content was written by AI or a human, paired with a plagiarism checker.

Since then it's grown into a full editorial suite that includes a fact checker, readability scorer, site-wide scanner, Chrome extension, and a feature called Deep Scan that doesn't just flag AI content — it shows you how to fix it.

The company is based in Collingwood, Canada and was founded by Jon Gillham, who has a background in content site building and SEO. He's been unusually transparent about what the tool can and can't do, which I think is worth mentioning in a space full of exaggerated accuracy claims.

The core use case for most people reading this: you're producing content with AI assistance, or you're managing writers who might be, and you want to know how much of it would be flagged as AI-generated before it goes live on your site. Originality.ai is the most purpose-built tool for that specific problem.

How Does Originality.ai Work?

Originality.ai uses machine learning models trained on a large labeled dataset of both human-written and AI-generated text. It analyzes patterns like perplexity — how predictable the text is — and burstiness, which measures variation in sentence complexity. The result is a probability score showing what percentage of the content reads as AI-generated, with highlighted text showing exactly which sections are flagging.
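To make those two signals a little more concrete, here is a toy sketch in Python. It is not Originality.ai's model. It assumes sentence length as a stand-in for sentence complexity when measuring burstiness, and a simple add-one-smoothed unigram model as a stand-in for the language model behind perplexity. The function names are my own and purely illustrative.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text):
    """Split on ., ! or ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (std / mean).

    Assumption: sentence length is used as a crude proxy for complexity.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def tokenize(text):
    """Lowercase the text and keep runs of letters and apostrophes as tokens."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text, reference):
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Lower values mean the text is more predictable given the reference.
    """
    ref_counts = Counter(tokenize(reference))
    vocab_size = len(ref_counts) + 1  # one extra slot for unseen words
    total = sum(ref_counts.values())
    tokens = tokenize(text)
    if not tokens:
        return float("inf")
    log_prob = sum(
        math.log((ref_counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))


uniform = "The cat sat here. The dog sat here. The bird sat here."
varied = "Stop. The weather turned quickly and nobody on the trail had packed a jacket. We ran."
print(burstiness(uniform), burstiness(varied))
```

Text with evenly sized sentences gets a burstiness of zero in this sketch, while text that mixes very short and very long sentences scores higher. A real detector would rely on a trained model rather than these hand-rolled proxies.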

What sets it apart from many competitors is that it was built specifically for web content, not academic writing. Turnitin, for example, was designed for detecting plagiarism in student essays and is only available through institutions. Originality.ai was designed for the kind of content that gets published on blogs, affiliate sites, and news outlets — and you can just sign up and start using it.

It offers four detection models: Lite (designed for content with some light AI editing), Turbo (targets heavily AI-edited text), Academic (for polished AI-assisted writing), and Multilingual (for non-English content). Each one is calibrated for a different tolerance level, so you can pick the right model depending on how strictly you want to screen.

What Features Does Originality.ai Have?

AI Content Detector

The core feature. Paste in text or submit a URL and you get back a percentage score showing how much of the content reads as AI-generated. The highlighted view shows exactly which sentences are flagging as AI and which are reading as human-written. You're not just getting a number — you're seeing where the problems are.

Detection covers all the major AI writing tools including ChatGPT, GPT-4o, GPT-5, Claude 4, Gemini 2.5, DeepSeek, Llama, and others. Originality.ai has published third-party verified accuracy studies showing 97–99%+ accuracy across the latest models, which is the strongest claim in the market that's actually backed by external research rather than self-reported figures.

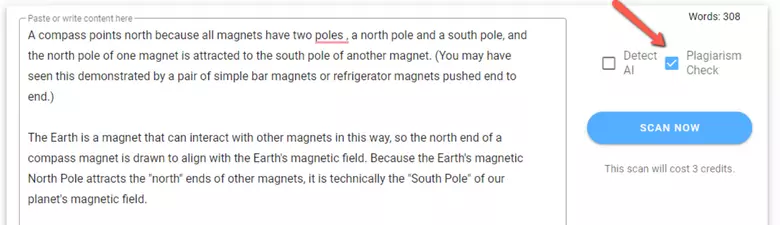

Plagiarism Checker

The plagiarism checker scans for duplicate content across the web and returns a score along with a list of matched sources. I ran my own comparison against Copyscape back in 2023 (see the test section below). In short: they're roughly comparable, with Copyscape returning more total matches on that particular piece. For most use cases — screening incoming freelance work before publishing — Originality.ai's plagiarism checker is more than sufficient, and you get it combined with AI detection in a single workflow.

Combined AI + plagiarism scans cost 2 credits per 100 words instead of 1. Worth doing when you're reviewing anything coming from an outside writer.

Deep Scan

This is the feature most people still don't know about, and I think it's the most useful addition since the original launch. Deep Scan doesn't just tell you that content is AI-written — it explains why specific passages are flagging and gives you concrete suggestions for how to rewrite them to sound more natural. It's detection combined with an editing guide.

I feel like this changes the use case entirely. You're not just auditing content, you're getting a clear path to fix it. For anyone running a content operation who uses AI as a first draft tool, this is worth the subscription cost on its own. Back in 2023 I was running flagged content through Jasper's Content Improver paragraph by paragraph to try to get the scores down. Deep Scan essentially does that guidance for you now.

Site Scanner

The site scanner lets you enter any domain and crawl the entire website to audit how much content reads as AI-generated, page by page. You can run it on your own site to find published pages that might be at risk, or on a competitor's site to see what they're working with.

For anyone managing a site that's gone through a Google algorithm hit, being able to audit your own content at scale is a significant time-saver. Checking pages one by one manually is just not practical at any meaningful volume.

Chrome Extension

The Chrome extension brings AI and plagiarism detection into Google Docs and any web page you're browsing. You can scan the page you're on or select a piece of text and check it on the spot.

The standout feature inside the extension is Writer Replay — it plays back the writing process character by character using a document's revision history in Google Docs. A naturally written document shows typed text, pauses, and edits. An AI-generated one shows a massive block of text pasted in all at once. If you're managing remote writers and accepting Google Docs submissions, this is one of the most useful vetting tools I've come across.

Fact Checker

The fact checker flags claims in your content and provides context or citations for those claims. It won't catch everything, but it's a useful first pass for catching obvious hallucinations before they go live — especially if you're working from AI-generated first drafts that may have invented statistics or misattributed quotes.

Readability Checker

The readability tool scores your content based on an analysis of 20,000+ search results to identify what readability scores correlate with ranking in Google. It's not a groundbreaking standalone feature, but having it in the same workflow as your detection scan means one less tool to juggle.

Content Optimizer

A more recent addition that provides SEO suggestions alongside GEO (generative engine optimization) suggestions — meaning it also looks at how your content might perform in AI-driven search results like ChatGPT, Perplexity, and Google's AI Overviews. I think that's a smart direction. As search shifts toward AI assistants, optimizing for LLM visibility is becoming a real concern for anyone building content-driven traffic.

Team Management and API

For agencies and larger operations, Originality.ai has role-based team access — Admins, Managers, and Editors each have different permission levels. All scans are logged in an audit trail, which matters if you need to demonstrate editorial due diligence to clients. The API lets you integrate detection directly into your content management workflow so you can automate screening rather than doing it manually. API access requires the Enterprise plan.

How Accurate Is Originality.ai? — My Test Results

Strong — especially at detecting human-written content, and solid on AI-written content with a few misses.

To see how Originality.ai performs, I fed the tool the following:

- 10 pieces of AI-written content

- 10 pieces of human-written content

I'll use the data from these tests to judge how accurate Originality.ai actually is.

First Test - AI Written Content Checked With Originality.AI

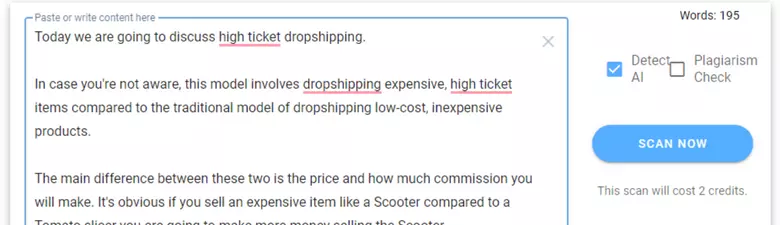

To conduct this test, I generated text with three popular AI writing tools: ChatGPT, Jasper AI, and Copy.ai. I prompted each tool with random queries on different subjects, then copied and pasted the output into Originality.ai. I tried to keep each sample to no more than 250 words to keep things consistent.

Originality.AI - Testing The Tool

PASSED = Originality.ai detected this AI content as AI content.

FAILED = Originality.ai did not detect the majority of the AI content as AI.

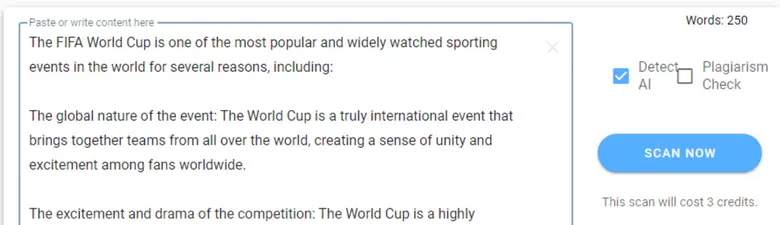

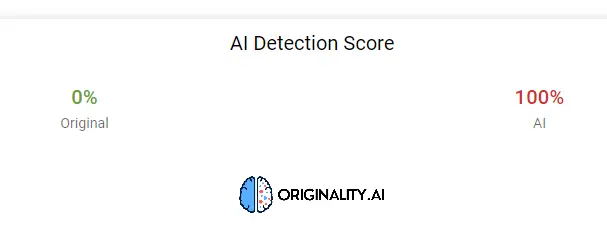

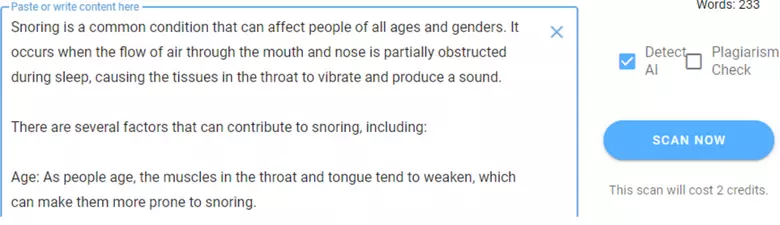

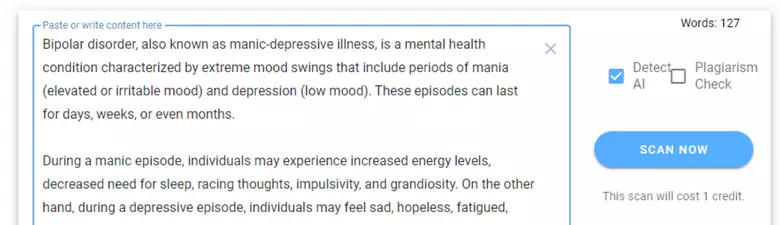

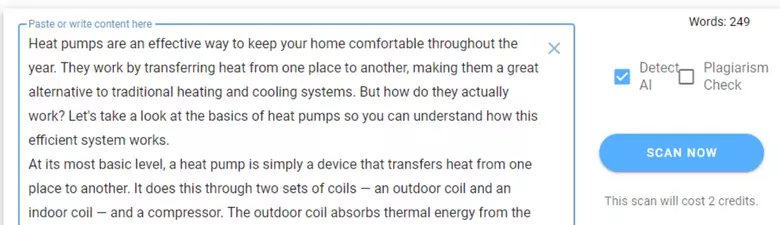

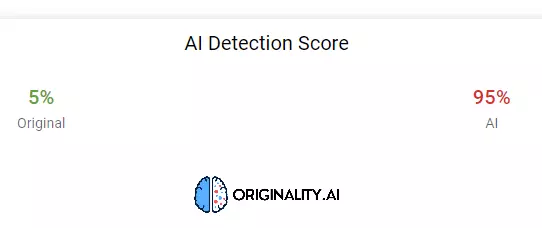

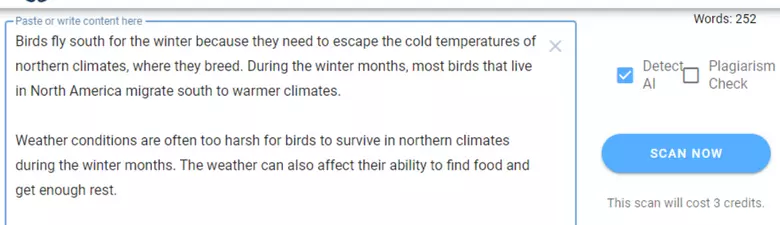

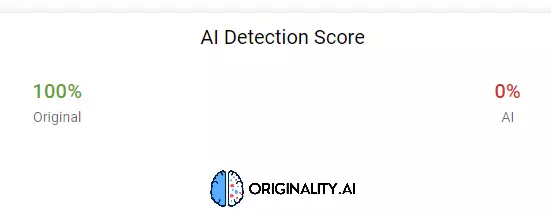

Test #1 - ChatGPT

100% AI detected - test passed - A lot of editing will need to be done before posting this content.

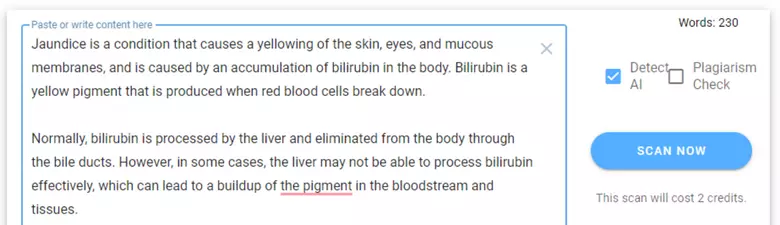

Test #2 - ChatGPT

100% AI - Test Passed - lots of editing to do before posting!

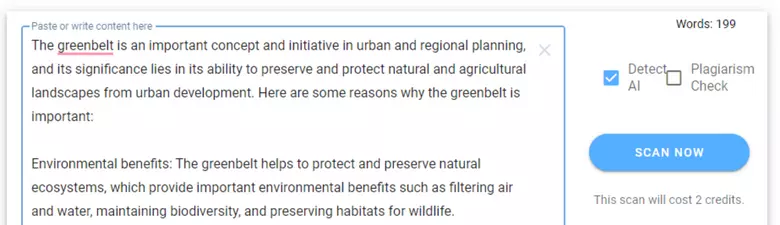

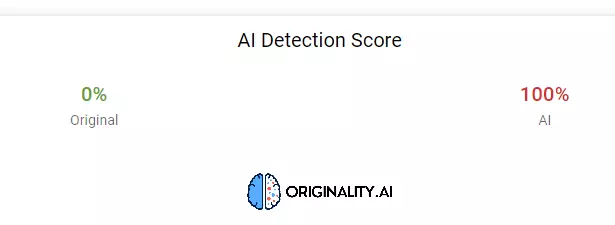

Test #3 - ChatGPT

86% AI - A substantial amount of editing needed before posting

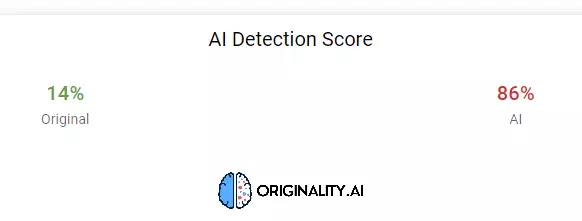

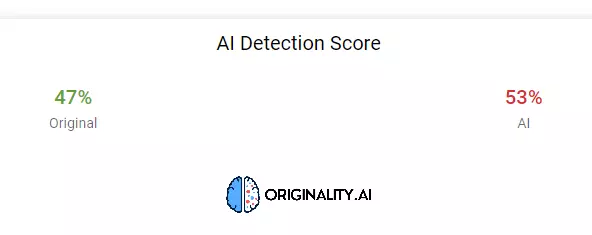

Test #4 - ChatGPT

53% AI - I'm calling this one neutral but editing will still need to be done before posting the article

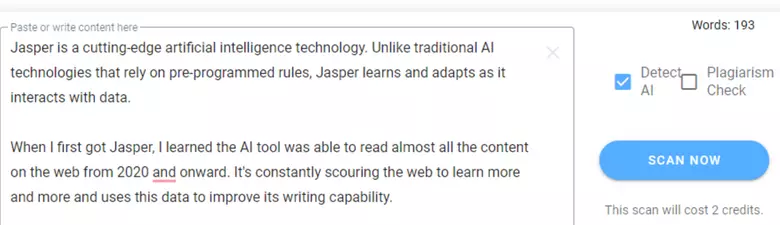

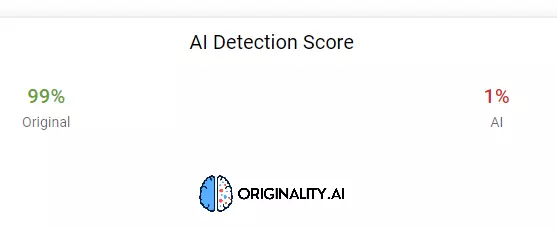

Test #5 - Jasper AI

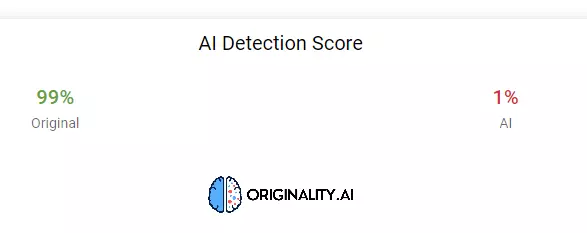

1% AI - Test Failed - Showing 99% original, but this is 100% AI-written by Jasper

Test #6 - Jasper AI

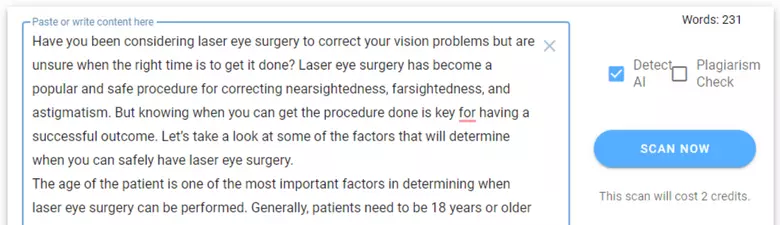

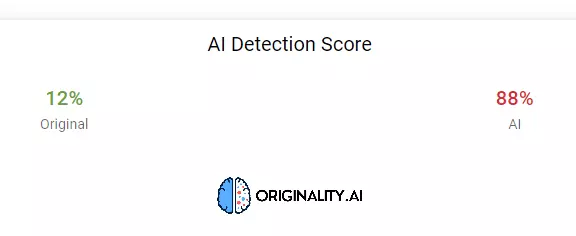

88% AI - Test Passed and edits will need to be made before going live

Test #7 - Jasper AI

95% AI - Test Passed - Plenty of editing needed before posting!

Test #8 - Copy AI

98% AI - Test Passed - Still more editing needed!

Test #9 - Copy AI

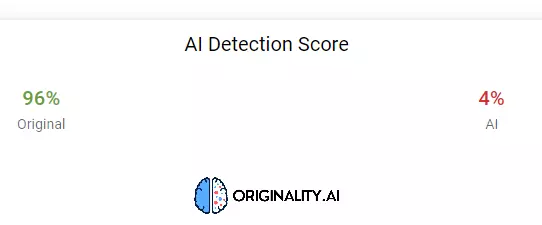

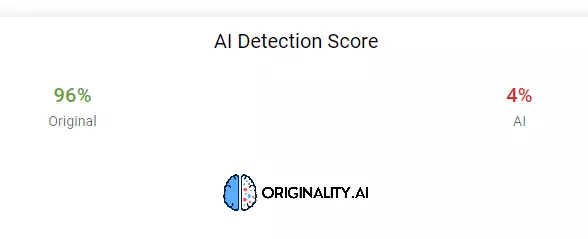

4% AI - Test Failed - Shows 96% original but content is 100% written by Copy AI

Test #10 - Copy AI

99% AI - Test Passed - This article needs serious attention and editing!

Test Results Summary

Originality.ai detected AI content in 8 of the 10 tests — an 80% hit rate. Both failures came from specific tools: one Jasper piece scored just 1% AI, and one Copy.ai piece scored just 4% AI, despite both being fully AI-written. So it's not infallible, but it caught the clear majority of what I threw at it. The ChatGPT results were the strongest — 3 of 4 came back at 86% AI or higher.
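For anyone who wants to check the math, the tally can be reproduced with a few lines of Python. The scores are the ten results listed above. Treating "more than 50% AI" as a detection is my reading of the pass/fail definition used in this test, and the helper names are just for illustration.

```python
# (tool, percent of the text scored as AI) for the ten AI-written samples
ai_results = [
    ("ChatGPT", 100), ("ChatGPT", 100), ("ChatGPT", 86), ("ChatGPT", 53),
    ("Jasper AI", 1), ("Jasper AI", 88), ("Jasper AI", 95),
    ("Copy AI", 98), ("Copy AI", 4), ("Copy AI", 99),
]


def detected(ai_percent, threshold=50):
    """A sample counts as detected when more than half of it is scored as AI."""
    return ai_percent > threshold


def hit_rate(results):
    """Fraction of samples the detector caught."""
    hits = sum(detected(score) for _, score in results)
    return hits / len(results)


def misses(results):
    """Samples that were fully AI-written but not detected."""
    return [(tool, score) for tool, score in results if not detected(score)]


print(f"Hit rate: {hit_rate(ai_results):.0%}")  # Hit rate: 80%
print("Missed:", misses(ai_results))  # Missed: [('Jasper AI', 1), ('Copy AI', 4)]
```

With only ten samples, one result either way moves the hit rate by ten points, so treat the 80% as a rough indication rather than a benchmark.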

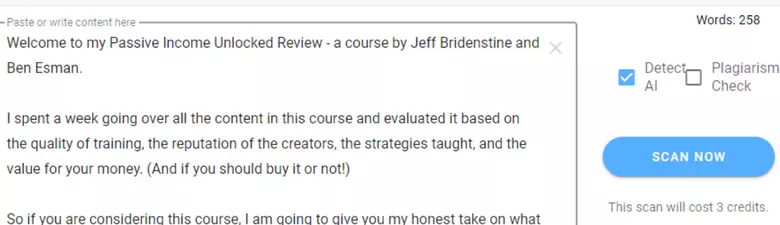

Second Test - Hand-Written, Original Content Checked With Originality.AI

For this test I took 10 pieces of content written entirely by me — no AI involved — and ran them through the detector to see how many false positives it would generate.

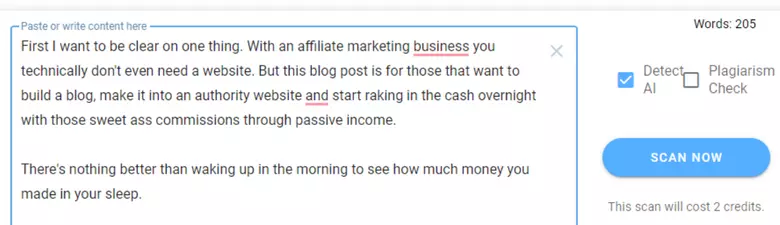

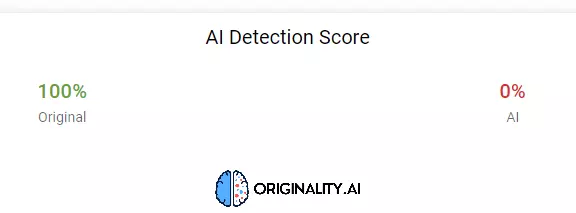

Test #1

100% Original! - Yes! Originality AI knows I am NOT a BOT! It passed the test.

Test #2

100% Original! - This is looking GOOD! Passed!

Test #3

100% Original and passed the test - 3 for 3 so far.

Test #4

96% Original and passed the test - Close enough but not sure why it thinks 4% is AI.

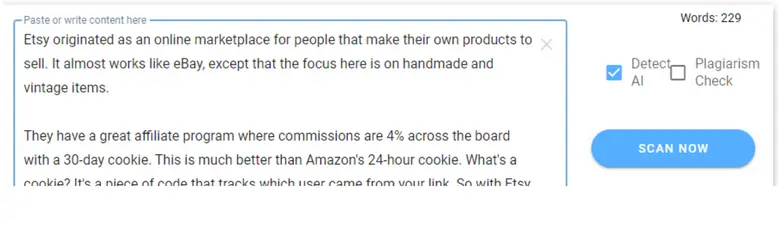

Test #5

93% Original but it thinks AI wrote 7% of the content. Still a pass as it sees it as 93% original.

Test #6

100% - That's much better, passed with flying colors.

Test #7

80% - This one didn't do as well and I'm not sure why, but at 80% original, I'll take it.

Test #8

98% - Almost perfect which it should have been, but hey 2% off is nothing.

Test #9

99% - Almost perfect! Maybe that 1% is because it doesn't like any mention of Jasper AI?

Test #10

92% - Almost perfect. Just 8% is flagged as AI, even though none of it is.

Test Results Summary For Human-Written Content

Nine out of ten came back at 92% original or better. Most scored 96–100%. The one that surprised me was the 80% result — that content was 100% mine with no AI involved at all.

This is the false positive issue that any honest Originality.ai review needs to acknowledge. It happens. My advice: treat any score of 80% original or higher as a pass on human-written content, and always use your judgment alongside the score rather than treating the tool as the final word.
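Here is the same kind of quick tally for the human-written tests, as a small Python sketch. The scores are the ten "percent original" results listed above. The cutoff of 80 reflects my rule of thumb, with a score of exactly 80% counted as a pass (an assumption on my part), and the stricter cutoff of 90 is only there to show how sensitive the verdict is to where you draw the line.

```python
from statistics import mean

# Percent scored as original for the ten human-written samples, in test order
human_original = [100, 100, 100, 96, 93, 100, 80, 98, 99, 92]


def passes(original_percent, cutoff=80):
    """Assumption: a score at or above the cutoff counts as human-written."""
    return original_percent >= cutoff


def count_at_least(scores, floor):
    """How many samples scored at or above `floor` percent original."""
    return sum(score >= floor for score in scores)


def false_positives(scores, cutoff=80):
    """Human-written samples that would be rejected at this cutoff."""
    return [score for score in scores if not passes(score, cutoff)]


print(count_at_least(human_original, 92))   # 9
print(count_at_least(human_original, 96))   # 7
print(false_positives(human_original))      # []
print(false_positives(human_original, 90))  # [80]
print(mean(human_original))                 # 95.8
```

Raising the cutoff from 80 to 90 would have rejected one piece that I wrote entirely myself, which is why I treat the score as a screening signal and not a verdict.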

What I found overall is that Originality.ai is actually better at evaluating human-written content than it is at catching AI-written content. It's very reliable at confirming that human content is human. The AI detection is strong but not perfect — which matches what independent testing has found across the market, too.

How Does the Plagiarism Checker Compare to Copyscape?

Very well — it's a legitimate alternative for most content screening use cases.

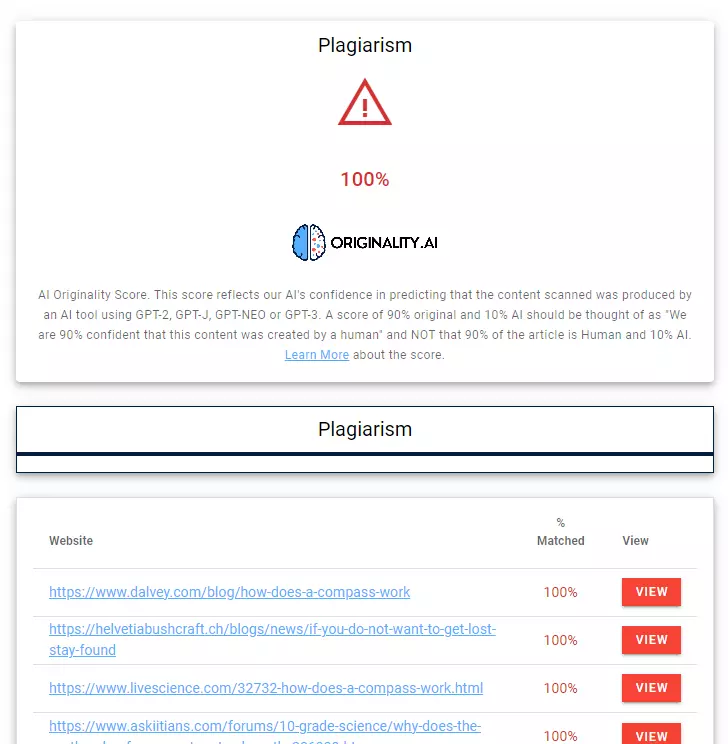

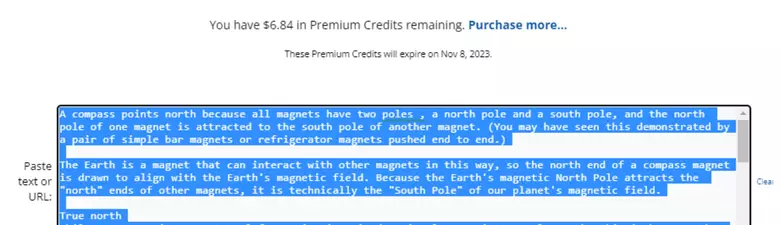

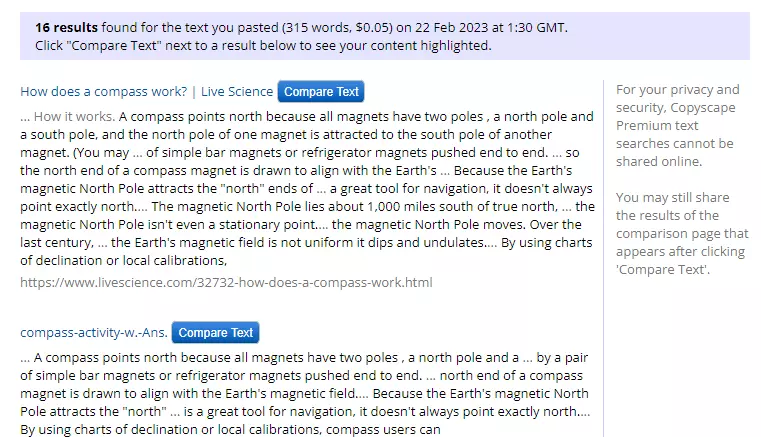

For this test, I grabbed a piece of content published on the web and ran it through both Originality.ai and Copyscape to compare.

And the results after the scan:

As it should, it reports 100% plagiarism. So Originality.ai passed the plagiarism test with flying colors.

It even lists all the sources where it found the duplicate content that other people ripped off and posted elsewhere.

Up next, Copyscape.

And the results...

Both tools correctly identified the content as plagiarized. The meaningful difference: Copyscape found 16 instances of duplication across the web while Originality.ai found 4. Copyscape went deeper on that particular piece.

That said, Originality.ai still returned a clear plagiarism result, which is the outcome that matters for most use cases. If plagiarism detection is your only need and you're already on Copyscape, I'd keep Copyscape. But if you're also checking for AI content, running both checks through Originality.ai in a single scan is a better workflow than paying for two tools separately.

What Do You Do When AI Content Gets Flagged?

Obviously, do not post this content as-is. Google will likely detect it, if not now then in the future. I would imagine that Google will frown on AI-written content, so you need to make sure yours isn't flagged.

So how do you do this?

You do this by editing the content. In this next step, I'll edit one of the pieces above that was detected as AI and see what score we can get.

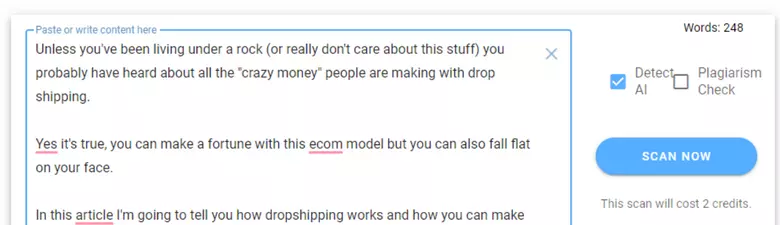

Test #1 Using Jasper's Content Improver Template

I thought I'd start off the lazy way and see what happens when I rewrite the text from "Test #1 - ChatGPT" using the "Content Improver" template in Jasper AI.

Rather than dumping the whole 250 words into the tool, I went paragraph by paragraph, fed each one into Jasper's Content Improver, and then pieced it all together into a new document that basically said the same thing.

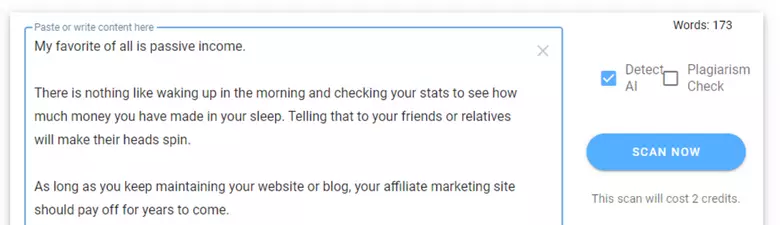

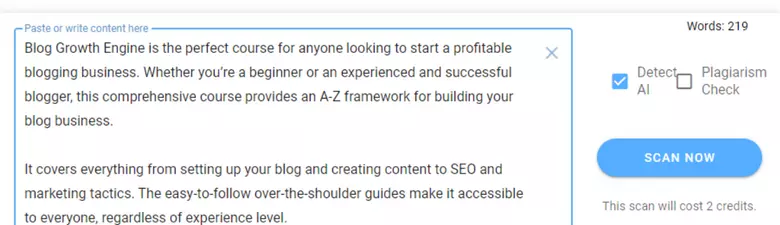

Here is the newly created text, ready to go into Originality.ai:

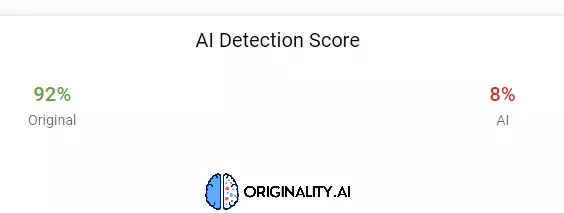

and here we go...

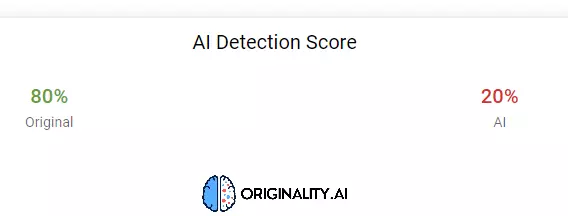

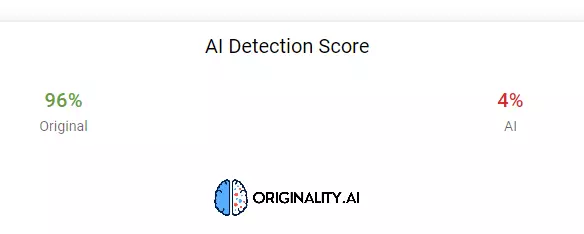

I must admit, I didn't expect 96% original content by doing this. So this looks to be an easy and quick solution should you need to fix your AI detection score. But of course, you'll need Jasper for this to work. Get a free trial here.

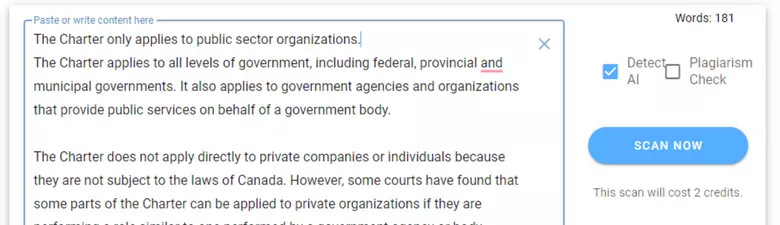

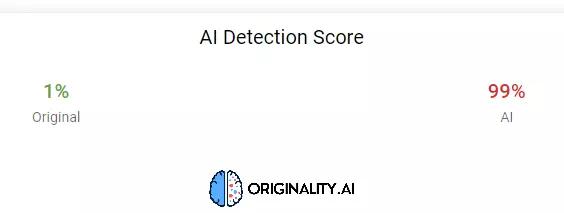

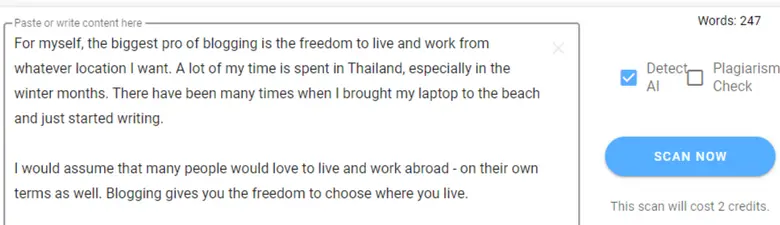

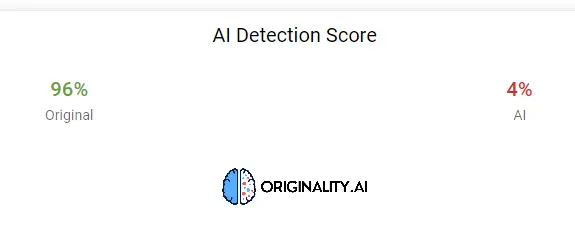

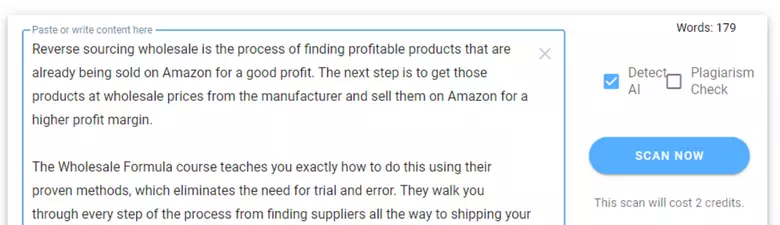

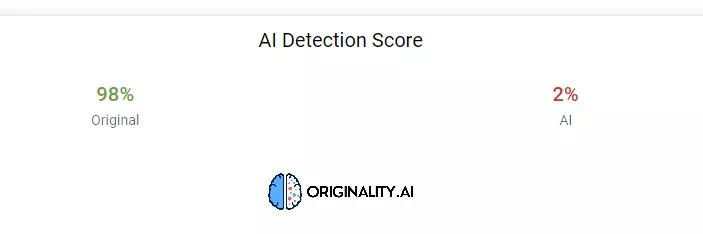

Test #2 - Rewriting The Content Manually

In this test, I again take the original text generated by ChatGPT in the first test, but this time I make the changes manually without using any AI tools.

I rewrote the content in my own words while still using some of the AI content. Here's what I came up with, ready for the test!

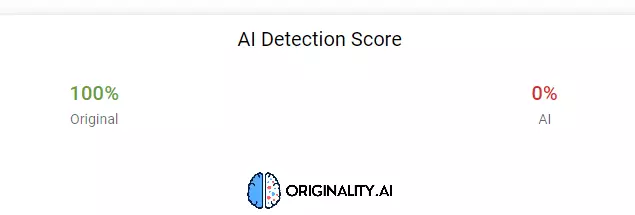

And here are the results:

Both approaches — running content through Jasper's Content Improver and rewriting it manually — got the same result: around 96% original. The takeaway is that you don't need to rewrite everything from scratch. Reworking the AI text paragraph by paragraph is enough to pass detection.

In 2026 the process is more straightforward than it was when I ran those tests. The Deep Scan feature now shows you specifically which passages are flagging and gives you editing guidance inline. You don't have to guess what to change. If you're managing a content workflow that uses AI drafts, Deep Scan reduces the trial-and-error considerably.

How Much Does Originality.ai Cost?

Starting at $30 one-time or $12.95/month, Originality.ai is priced fairly for what it delivers.

There are three tiers:

The Pay-as-you-go plan is a one-time $30 purchase that gives you 3,000 credits, valid for two years. One credit scans 100 words, so 3,000 credits covers 300,000 words of scanning. If you're a solo blogger publishing a handful of posts per month, this is likely all you'll ever need. You can easily get through a year of content without paying again.

The Pro plan runs $12.95/month billed annually (or $14.95 month-to-month) and gives you 2,000 credits per month, 30-day scan history, role-based team access, file uploads, URL scanning, and access to the Chrome extension. This is the right tier for anyone managing a small content team or running scans across multiple sites regularly.

The Enterprise plan is $136.58/month billed annually and is built for agencies and larger publishers. You get 15,000 monthly credits covering 1.5 million words, API access, 365-day scan history, priority support, and unlimited team seats. If you're running a content operation at any kind of scale, this is what makes sense.

One thing to know: combined AI + plagiarism scans cost 2 credits per 100 words instead of 1, so if you're running dual scans on everything your usage will be roughly double what you'd expect.

I think the pay-as-you-go option makes this an easy decision for most people. At $0.01 per 100 words, scanning a 2,000-word article costs 20 cents. The barrier to entry is low enough that you can test it properly without committing to anything.
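If you want to estimate your own costs, the credit math above fits in a short Python sketch. The prices and credit rules come from this section. Rounding a partial 100-word block up to a full credit is my assumption (I haven't confirmed how the tool rounds), and the function names are made up for illustration.

```python
import math

WORDS_PER_CREDIT = 100
PACK_PRICE_USD = 30      # pay-as-you-go pack price
PACK_CREDITS = 3_000     # credits included in the pack


def credits_needed(word_count, with_plagiarism=False):
    """Credits for one scan: 1 per 100 words, 2 per 100 words for AI + plagiarism.

    Assumption: a partial block of 100 words is rounded up to a full block.
    """
    blocks = math.ceil(word_count / WORDS_PER_CREDIT)
    return blocks * (2 if with_plagiarism else 1)


def pay_as_you_go_cost(word_count, with_plagiarism=False):
    """Cost in USD of one scan at the pay-as-you-go rate."""
    credits = credits_needed(word_count, with_plagiarism)
    return round(credits * PACK_PRICE_USD / PACK_CREDITS, 2)


def words_covered(credits, with_plagiarism=False):
    """How many words a credit balance covers."""
    per_block = 2 if with_plagiarism else 1
    return credits // per_block * WORDS_PER_CREDIT


print(pay_as_you_go_cost(2_000))                        # 0.2
print(pay_as_you_go_cost(2_000, with_plagiarism=True))  # 0.4
print(words_covered(3_000))                             # 300000
print(words_covered(3_000, with_plagiarism=True))       # 150000
```

The last line is the one to watch: if you run the combined AI and plagiarism scan on everything, the $30 pack covers 150,000 words instead of 300,000.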

What Are the Pros and Cons of Originality.ai?

Pros

The accuracy is genuinely strong. Originality.ai has been validated by multiple third-party studies as the most accurate AI detector in its category, and it's the only major detector I know of that was purpose-built for web content rather than academic writing. That distinction matters for publishers. It also detects paraphrased AI content more reliably than competitors like GPTZero — content that's been run through a paraphrasing tool is harder to flag, and Originality.ai handles it better.

The feature set has grown into something well beyond what I originally reviewed. Deep Scan, the Site Scanner, Writer Replay via the Chrome extension, the Content Optimizer with GEO suggestions — these additions turn it from a single-use detection tool into something that fits into a broader content quality workflow. I feel like the direction the team has taken this is genuinely aligned with what serious publishers actually need.

Pricing is fair. The pay-as-you-go option at $30 is a low-commitment way to evaluate the tool properly, and the Pro subscription at $12.95/month is reasonable for the full feature set. The founder is also unusually transparent about limitations and publishes their accuracy research publicly, which builds real trust in a space full of inflated claims.

Cons

False positives are the main issue, and they're worth taking seriously if you're using the tool to evaluate freelancers. My testing found one human-written piece score as low as 80% original — fully my content, no AI involved. The overall false positive rate is lower than most competitors, but it still means you shouldn't use any single score as a final verdict on someone's work. Use it as a screening signal, not a courtroom.

There's no meaningful free plan. You can get 50 free credits by installing the Chrome extension, which is enough for a handful of short scans but not enough to evaluate the tool under real conditions. For a paid tool, a more generous trial would lower the friction for new users.

Monthly subscription credits expire at the end of each month if unused. If your content volume varies — busy months followed by slow ones — the pay-as-you-go pack may be a better fit than the recurring subscription.

Who Should Use Originality.ai?

Anyone publishing AI-assisted content on a site that depends on search rankings.

If you're using AI to write first drafts and editing before publishing, Originality.ai tells you where the editing wasn't enough. If you're accepting content from freelancers, it gives you a screening layer before anything goes live. If you're running a content site that's been through a Google algorithm hit and want to audit your existing pages, the site scanner is genuinely useful for that.

I think it's the right tool for affiliate marketers, SEO content site owners, and content agencies specifically. These are the people most exposed to algorithm risk from AI content and most likely to be working at a volume where manual judgment on every single piece isn't realistic. If you want to learn more about building the kind of AI-driven content operation where a tool like this fits in, I've written about that in my guide on how to make money with AI.

It's less suited for students or academic settings — Turnitin is the institutional standard there and it's trusted in ways Originality.ai isn't positioned to be. And if you only publish occasionally, just grab the pay-as-you-go pack rather than committing to a subscription.

What Are the Best Alternatives to Originality.ai?

GPTZero and Copyleaks are the two most relevant alternatives.

GPTZero is the most widely used alternative. It has a more generous free tier and performs reasonably well on AI detection, though independent testing has found its false positive rate is higher than Originality.ai's. If cost is the primary concern and you don't need plagiarism checking, it's worth evaluating. But for publishers who care about accuracy and want both detection and plagiarism in one place, I think Originality.ai is the stronger choice.

Copyleaks is solid on plagiarism and has AI detection built in. It's a reasonable alternative if you're already in their ecosystem or need enterprise-grade API integration — their API has been around longer and is more mature for large-scale use. For a casual publisher, though, there's no reason to choose it over Originality.ai.

Winston AI targets publishers specifically but has been found to have a higher false positive rate in independent comparisons. Worth knowing it exists, but I'd try Originality.ai first.

Turnitin is the academic gold standard but it's institution-only and not available for independent publishers to sign up for. Not a practical option for most people reading this review.

Originality.AI Updates

I am glad to see that the team at Originality.ai is working on making this software even better. Since I wrote this review, they have added some updates, and I will include the most important ones here.

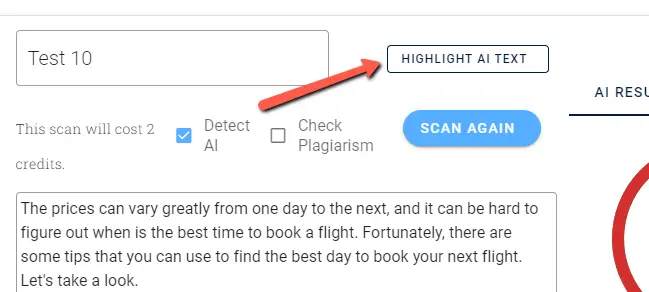

Update #1 - Highlight Suspected AI Content

When you run an AI detection test you now have an option to click on "Highlight AI Text". This will allow you to see which content in the text is likely AI written and what is not.

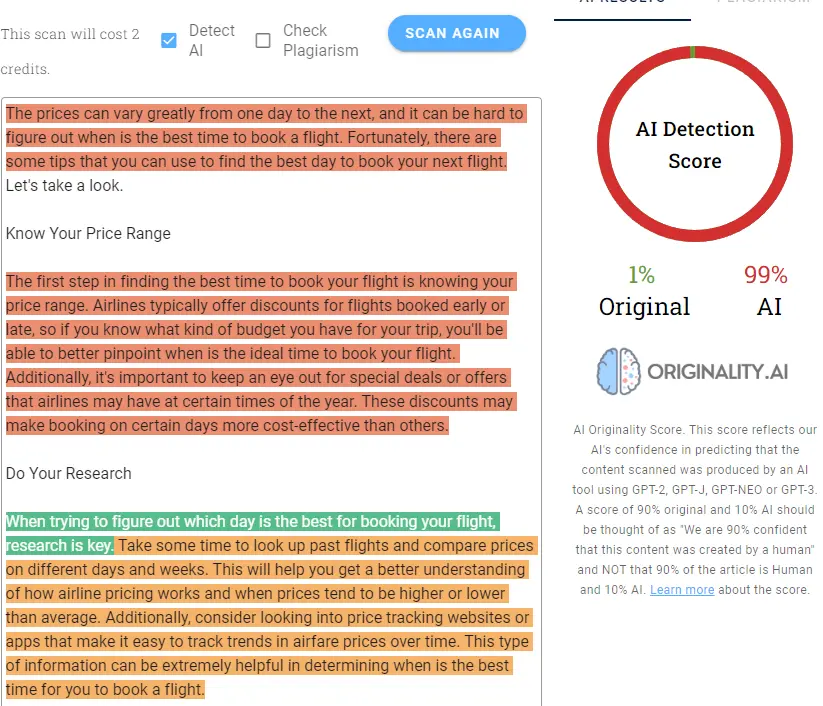

I did another test to see how it performs using 100% AI generated text.

As you can see, it scored the text as 99% AI, with the suspected passages highlighted in red and orange. The green section is also AI-written, but the tool counts it as original. Even so, for text that is 100% AI-written, the highlighting is accurate overall.

Update #2 - Detect Paraphrased Content

Since AI can easily rewrite an article, the development team at Originality.ai has added detection for paraphrased content.

This is especially great for teachers and instructors when checking their students' work. I suspect AI detection is going to be something very commonly used in education!

Conclusion — Is Originality.ai Worth It?

Yes. If you're publishing content on a site that depends on Google traffic — especially if you're using any AI in the process — Originality.ai is one of the smarter investments you can make in your content operation.

The core detection is accurate, the pricing is reasonable, and the additional features like Deep Scan and the Site Scanner add real value beyond just checking incoming content. I've followed this tool since it launched and I've seen it grow into something considerably more capable than what I originally tested.

The false positive issue keeps it from being a perfect score, and a more generous free trial would help new users evaluate it with less friction. But at $30 to get started with no subscription commitment, the barrier to entry is low enough that there's no reason not to try it.

Frequently Asked Questions

Is Originality.ai accurate?

Yes — it's one of the most accurate AI detectors available for web publishers. Independent third-party studies have validated it as the top-performing detector across multiple AI models. Its false positive rate is around 5%, which is lower than most competitors. That said, no detector is 100% accurate and it should always be used as a screening tool rather than a final verdict.

Does Originality.ai offer a free trial?

There's no traditional free trial, but you can get 50 free credits by installing the Chrome extension from the Chrome Web Store. That covers roughly 5,000 words of scanning — enough for a few test runs. The cheapest paid entry point is the $30 pay-as-you-go credit pack, which gives you 3,000 credits and is valid for two years.

Can Originality.ai detect paraphrased AI content?

Yes. Originality.ai added paraphrase detection early on and has continued improving it. Content that's been run through a tool like QuillBot is harder to flag — most detectors struggle with it. Originality.ai handles paraphrased AI content better than GPTZero or Winston AI in current testing.

Does Google penalize AI content?

Google's March 2024 core update specifically targeted AI spam sites, and their public guidance makes clear that scaled, low-quality AI content is a target. The distinction Google draws is between helpful, well-edited content (which can use AI) and lazy AI output published at scale (which it will penalize). If you're publishing unedited AI content, the risk is real. I'd rather know what my score is before it goes live.

What is Deep Scan in Originality.ai?

Deep Scan is a 2025 feature that combines AI detection with editing guidance. Instead of just flagging content as AI-written, it explains why specific passages are flagging and gives you concrete suggestions for how to rewrite them to sound more natural. It's essentially a detection tool and writing tutor in one — useful for anyone using AI drafts that need editing before publishing.

How much does Originality.ai cost?

Three options: a $30 one-time pay-as-you-go pack (3,000 credits, 2-year expiry), a Pro subscription at $12.95/month billed annually (2,000 monthly credits, 30-day scan history, team management, Chrome extension), and an Enterprise plan at $136.58/month billed annually (15,000 monthly credits, API access, 365-day history, priority support).